California has become the first state to mandate AI safety transparency from major artificial intelligence developers, with Governor Gavin Newsom signing SB 53 into law this week. The groundbreaking legislation requires industry giants including OpenAI and Anthropic to publicly disclose and adhere to their safety protocols, setting a new precedent for AI governance that could influence national policy.

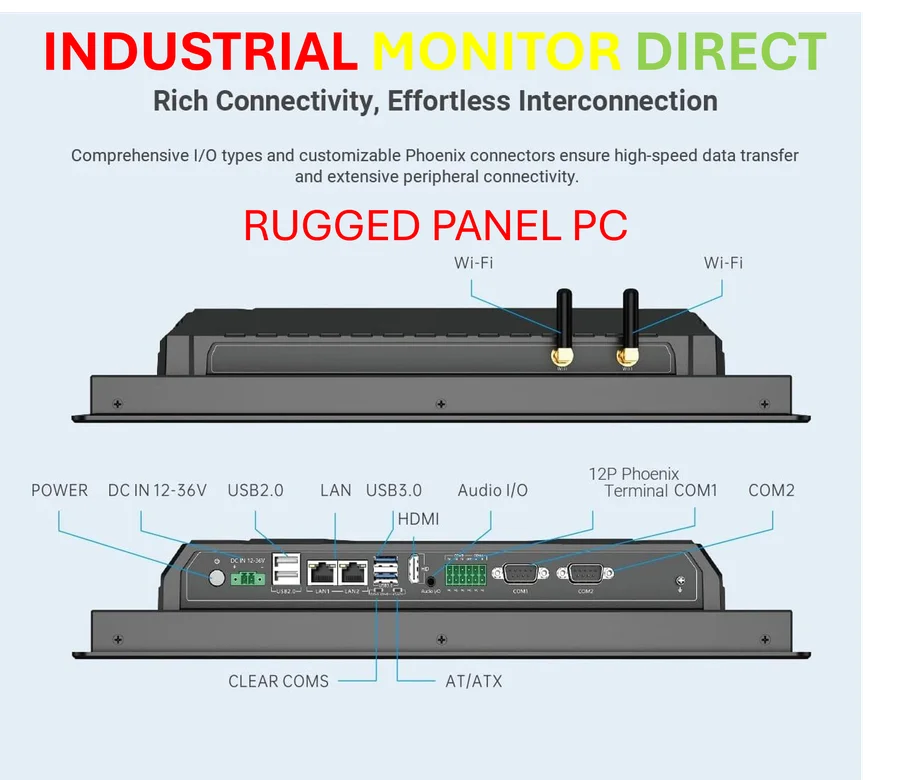

What SB 53 Actually Requires from AI Companies

SB 53 establishes transparency requirements for AI developers that meet specific computational thresholds. Companies must publicly document their safety testing protocols, establish whistleblower protections for employees who report safety concerns, and implement mandatory incident reporting for significant AI failures. The legislation specifically targets developers whose models are trained using more than 10^26 floating-point operations of compute, capturing the industry’s largest players while exempting smaller startups.
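To give a sense of scale for the 10^26 figure, the following is a minimal Python sketch that compares a rough training-compute estimate against that threshold. The "6 × parameters × training tokens" rule of thumb is a common back-of-the-envelope approximation and an assumption here, not something drawn from the bill, and the function names and model sizes are hypothetical illustrations rather than real systems.

```python
# Threshold cited in the article: 10^26 floating-point operations.
SB53_THRESHOLD_FLOPS = 10**26


def estimate_training_flops(num_parameters: int, num_tokens: int) -> int:
    """Rough training-compute estimate.

    Assumption: uses the common "6 * N * D" heuristic (about six
    floating-point operations per parameter per training token).
    This is an approximation, not a method defined by SB 53.
    """
    return 6 * num_parameters * num_tokens


def exceeds_threshold(training_flops: int) -> bool:
    """Return True if the estimate is strictly above the 10^26 threshold."""
    return training_flops > SB53_THRESHOLD_FLOPS


# Hypothetical model A: 70 billion parameters, 15 trillion training tokens.
model_a = estimate_training_flops(70 * 10**9, 15 * 10**12)

# Hypothetical model B: 2 trillion parameters, 10 trillion training tokens.
model_b = estimate_training_flops(2 * 10**12, 10 * 10**12)

print(f"Model A: {model_a:.2e} FLOPs, covered: {exceeds_threshold(model_a)}")
print(f"Model B: {model_b:.2e} FLOPs, covered: {exceeds_threshold(model_b)}")
```

Under these assumptions, the first hypothetical model lands more than an order of magnitude below the threshold while the second lands just above it, which illustrates why only the very largest training runs would fall under the compute criterion described here.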

According to Adam Billen, vice president of public policy at Encode AI, “The law creates a framework of transparency without imposing direct liability, allowing companies to demonstrate their safety commitments without fear of immediate legal consequences.” This approach differs significantly from the failed SB 1047, which would have established stricter liability standards. The official legislative text shows the bill focuses on documented processes rather than performance mandates, a key compromise that secured industry support.

Why SB 53 Succeeded Where SB 1047 Failed

The successful passage of SB 53 contrasts sharply with the fate of last year’s more ambitious SB 1047, which passed the Legislature but was vetoed by Governor Newsom amid industry opposition. SB 1047 would have established strict liability for AI developers whose systems caused significant harm, creating what critics called an “innovation-chilling” regulatory environment. The California Policy Center analysis highlighted how that approach risked driving AI development to less regulated jurisdictions.

SB 53’s “transparency without liability” framework proved more palatable to both legislators and industry leaders. By focusing on process documentation rather than performance penalties, the bill gained support from moderate Democrats and technology companies seeking regulatory certainty. The Governor’s Office legislative update emphasized how this balanced approach protects innovation while addressing public safety concerns, a compromise that reflects California’s position as both a regulatory leader and technology hub.

National Implications and Industry Response

California’s move comes as the federal government considers broader AI regulation, with the White House’s Blueprint for an AI Bill of Rights providing guiding principles but lacking enforcement mechanisms. SB 53 establishes the most concrete AI safety requirements in the United States to date, potentially creating a de facto national standard through California’s market influence. States including New York and Illinois are already considering similar legislation, according to tracking by the National Conference of State Legislatures.

Industry response has been cautiously positive, with major AI developers recognizing the value of standardized safety reporting. OpenAI’s published safety approach already aligns with many SB 53 requirements, suggesting the law formalizes existing industry best practices. Some smaller companies have expressed concerns about compliance costs, although the computational threshold exempts most startups from immediate obligations.

What’s Next for AI Regulation in California

SB 53 represents just the beginning of California’s AI regulatory framework, with several companion bills still under consideration. Governor Newsom must still decide on legislation governing AI companion chatbots, workplace AI monitoring, and deepfakes. The Public Policy Institute of California analysis identifies at least six additional AI-related bills moving through the legislature, which together could form a broader regulatory framework.

The implementation timeline gives companies until January 2026 to establish compliance programs, with the California Department of Technology overseeing enforcement. Future amendments may address emerging risks as AI capabilities advance, with the legislation containing provisions for regular review and updates. This adaptive approach acknowledges the rapid evolution of AI technology while establishing foundational safety principles.

California’s SB 53 marks a turning point in AI governance, proving that transparency requirements can gain bipartisan and industry support where stricter liability measures failed. The law establishes California as the national leader in AI safety regulation while providing a model other states will likely emulate. As AI continues to transform society, SB 53 represents the beginning rather than the end of meaningful AI oversight.